In a previous blog post, I wrote about applying lean to artifact-centric business processes. The main idea is that, to apply lean to software, you identify the process blocks in value stream maps with state transitions of the artifacts; the standard lean techniques of applying measures and identifying waste then follow. After some further reflection, and the dialog I have had with Troy, I realized I was forgetting something.

The main idea is correct, but there is a further consideration. Lead-time analytics must be handled differently for software than for a repeatable process such as manufacturing. For those not familiar with the term, an example of ‘lead time’ is the amount of time things spend in a backlog. A ‘thing’ could be a task waiting to be addressed or, equivalently, a work product awaiting transition. Sounds simple enough. Measuring lead time applies to a population of things: those things in the backlog. Applying a measure to a population is ‘descriptive statistics’, so we are left with the choice of statistic. I am sure most of you are thinking: why not the ‘average’? The average, or mean, may be intuitive, but it is often not a good choice. It is a good choice for describing the time to complete a population of repetitive tasks such as manufacturing steps. That is because the distribution of times is a narrow Gaussian (bell-shaped) curve whose peak is the mean, so the mean is a good representation of the distribution.

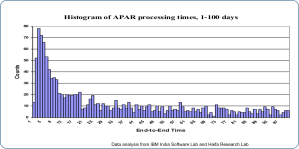
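
As a minimal sketch of the measurement itself, assuming each backlog item records when it entered and when it left the backlog, lead time is the difference between those two timestamps, and the mean is then a descriptive statistic over the population. The item data and the `lead_times_days` helper below are hypothetical, invented for illustration.

```python
from datetime import datetime
from statistics import mean, median

# Hypothetical backlog items: (time the item entered the backlog,
# time it left). The dates are invented for illustration only.
items = [
    (datetime(2024, 1, 1), datetime(2024, 1, 5)),
    (datetime(2024, 1, 2), datetime(2024, 1, 7)),
    (datetime(2024, 1, 3), datetime(2024, 1, 8)),
    (datetime(2024, 1, 4), datetime(2024, 1, 10)),
    (datetime(2024, 1, 5), datetime(2024, 1, 10)),
]


def lead_times_days(backlog):
    """Return each item's lead time (time spent in the backlog) in days."""
    return [(left - entered).total_seconds() / 86400 for entered, left in backlog]


times = lead_times_days(items)
# For a narrow, symmetric population like this one, mean and median agree.
print(f"mean={mean(times):.1f} days, median={median(times):.1f} days")
```

With lead times of 4, 5, 5, 6 and 5 days, both statistics come out at 5.0 days, which is the situation where the mean is a fair summary.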

The problem is that the distribution of software lead times is not normal. In fact, at IBM we looked at the lead times of over 20 different teams doing code fixes to address problem reports. The data distribution looks like this, and it is far from Gaussian.

The mean of this distribution doesn’t tell us much, yet it is this lead-time distribution we want to improve. I have some ideas on what statistic to choose, but no firm answer, so for this blog I will pose the question and see if I get some suggestions.
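
To make the point about the mean concrete, here is a small Python sketch on invented, right-skewed lead times (they are not the IBM data). The `summarize` function is a hypothetical helper that reports the mean alongside the median and quartiles from Python's standard `statistics` module; these are shown only as candidate statistics, not as an answer to the question above.

```python
from statistics import mean, median, quantiles

# Hypothetical right-skewed lead times in days: most items close quickly,
# a few sit in the backlog for months. Invented numbers, not the IBM data.
lead_times = [1, 1, 2, 2, 2, 3, 3, 4, 5, 7, 9, 14, 30, 60, 120]


def summarize(days):
    """Compare candidate descriptive statistics for a lead-time population."""
    avg = mean(days)
    return {
        "mean": avg,
        "median": median(days),
        # Quartile cut points (25th, 50th and 75th percentiles).
        "quartiles": quantiles(days, n=4),
        # Share of items that finished faster than the mean.
        "share_below_mean": sum(d < avg for d in days) / len(days),
    }


for name, value in summarize(lead_times).items():
    print(name, value)
```

In this made-up sample the mean is about 17.5 days, more than four times the median of 4 days, and 80% of the items finish faster than the mean. A few long-running items drag the mean away from where most of the work actually sits.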
